Modern businesses run on data, but data rarely flows in a straight, predictable line. It moves through ingestion layers, transformation jobs, orchestration tools, warehouses, dashboards, and machine learning models. At every step, something can break. A delayed batch job, a schema change, or a silent data quality issue can ripple across systems and disrupt decision-making. That’s why data pipeline monitoring tools have become mission-critical for data teams determined to prevent failures before they escalate.

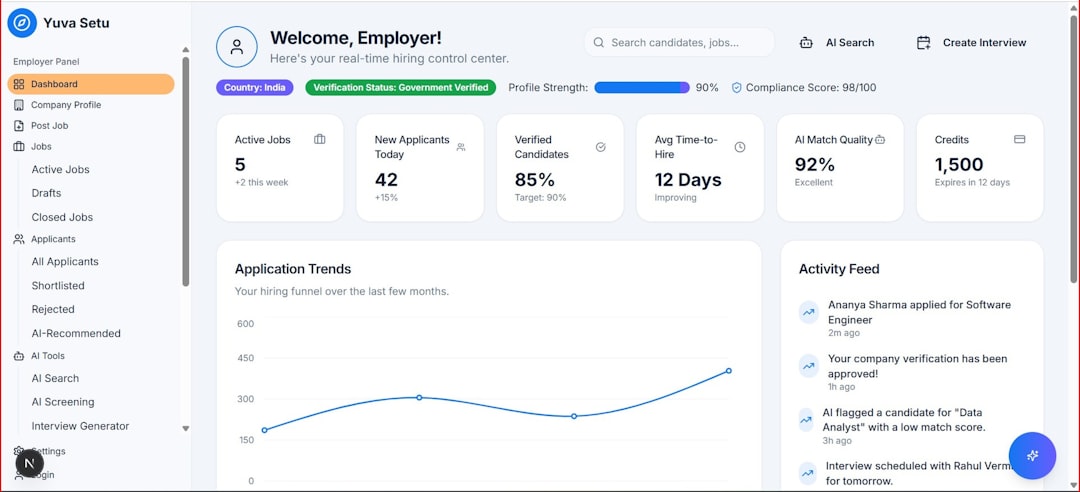

TL;DR: Data pipeline monitoring tools help teams detect outages, schema changes, performance bottlenecks, and data quality issues before they impact business operations. The best tools combine observability, alerting, data lineage, and anomaly detection in one place. In this guide, we explore seven data pipeline monitoring solutions, what makes each unique, and how to choose the right one for your environment. A comparison table after the tool reviews helps you evaluate them side by side.

Without observability, data pipelines are a black box. A dashboard may show incorrect numbers, but the root cause could lie anywhere from ingestion to transformation. Effective monitoring tools shine a light on these hidden processes, giving engineers and analysts actionable insights.

What to Look for in a Data Pipeline Monitoring Tool

Before diving into specific tools, it’s important to understand the core capabilities that make a monitoring solution effective:

- Real-time alerting: Immediate notification when jobs fail, slow down, or generate anomalies.

- Data quality checks: Automated validation of missing values, duplicate records, or unusual patterns.

- Schema change detection: Alerts when upstream systems modify data structures.

- Data lineage: Clear visualization of upstream and downstream dependencies.

- Anomaly detection: Statistical or machine learning-based detection of unusual behavior.

- Integration flexibility: Compatibility with orchestration tools, warehouses, and ETL platforms.
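To make one of these capabilities concrete, here is a minimal sketch of schema change detection in plain Python. It assumes a schema can be represented as a mapping from column name to type name; the function `diff_schema` and the sample columns are hypothetical and do not come from any of the tools covered below.

```python
from typing import Dict, List


def diff_schema(expected: Dict[str, str], observed: Dict[str, str]) -> Dict[str, List[str]]:
    """Compare two column-name -> type mappings and report the differences."""
    added = sorted(set(observed) - set(expected))
    removed = sorted(set(expected) - set(observed))
    # Columns present on both sides whose declared type has changed.
    retyped = sorted(
        col for col in set(expected) & set(observed)
        if expected[col] != observed[col]
    )
    return {"added": added, "removed": removed, "retyped": retyped}


# Hypothetical schemas: what the pipeline was built for vs. what arrived today.
expected = {"order_id": "int", "amount": "float", "created_at": "timestamp"}
observed = {"order_id": "int", "amount": "string", "currency": "string"}

changes = diff_schema(expected, observed)
if any(changes.values()):
    print("Schema drift detected:", changes)
```

A real monitoring tool would typically read both schemas from warehouse metadata and route the result to an alerting channel rather than printing it.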

With those criteria in mind, here are seven data pipeline monitoring tools that stand out.

1. Monte Carlo

Best for end-to-end data observability at scale.

Monte Carlo is one of the pioneers in the data observability space. It focuses on minimizing data downtime by proactively identifying issues before they surface in analytics dashboards.

Key features:

- Automated anomaly detection

- Freshness and volume monitoring

- Schema change tracking

- Field-level lineage

Monte Carlo’s strength lies in its ability to detect subtle shifts in data distribution that may indicate hidden issues. For large enterprises with complex, multi-layer data stacks, it provides a comprehensive safety net.
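The idea behind volume monitoring can be illustrated with a simple statistical check. The sketch below is a generic z-score test on daily row counts, not Monte Carlo's actual detection method, which the vendor does not expose as code here. The function name, the threshold of 3.0, and the sample counts are all assumptions chosen for illustration.

```python
from statistics import mean, stdev
from typing import Sequence


def is_volume_anomaly(history: Sequence[int], today: int, threshold: float = 3.0) -> bool:
    """Flag today's row count if it lies more than `threshold` sample
    standard deviations away from the historical mean."""
    if len(history) < 2:
        return False  # Not enough history to estimate spread.
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly constant history: any change at all is unusual.
        return today != mu
    return abs(today - mu) / sigma > threshold


# Hypothetical row counts for the last seven daily loads.
daily_rows = [10_120, 9_980, 10_050, 10_210, 9_900, 10_075, 10_130]

print(is_volume_anomaly(daily_rows, 10_090))  # a typical day
print(is_volume_anomaly(daily_rows, 4_200))   # a load that lost most of its rows
```

With this sample history, the first call is not flagged and the second is. Production systems usually go further, for example by accounting for weekly seasonality, which a plain z-score ignores.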

2. Datadog

Best for teams already using infrastructure monitoring.

While Datadog is traditionally known for infrastructure and application monitoring, it also offers powerful data pipeline monitoring capabilities. By integrating logs, metrics, and traces, Datadog provides full-stack visibility.

Why it stands out:

- Integrated monitoring across cloud services

- Custom dashboards

- Rich alerting mechanisms

- Extensive integrations

If your pipelines are tightly coupled with cloud workloads, Datadog offers a centralized monitoring experience rather than adding another standalone solution.

3. Great Expectations

Best for open-source data quality validation.

Great Expectations focuses specifically on data quality testing. It allows teams to define expectations—rules about what data should look like—and validate datasets against those rules.

Key benefits:

- Open-source and highly customizable

- Strong documentation features

- Integration with orchestration tools

- Data validation during pipeline execution

Although it is not a full observability platform by itself, Great Expectations excels as a core component of a broader monitoring strategy.
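The concept of an expectation can be sketched in a few lines of plain Python. This deliberately does not use the Great Expectations API, which has changed across releases; every name here (`expect_not_null`, `expect_unique`, `expect_between`, `validate`) is a hypothetical helper that only illustrates the pattern of declaring rules and validating a dataset against them.

```python
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]
Expectation = Callable[[List[Row]], bool]


def expect_not_null(column: str) -> Expectation:
    """Every row must have a non-None value in `column`."""
    return lambda rows: all(row.get(column) is not None for row in rows)


def expect_unique(column: str) -> Expectation:
    """No two rows may share the same value in `column`."""
    def check(rows: List[Row]) -> bool:
        values = [row.get(column) for row in rows]
        return len(values) == len(set(values))
    return check


def expect_between(column: str, low: float, high: float) -> Expectation:
    """Every value in `column` must be present and within [low, high]."""
    return lambda rows: all(
        row.get(column) is not None and low <= row[column] <= high
        for row in rows
    )


def validate(rows: List[Row], expectations: Dict[str, Expectation]) -> Dict[str, bool]:
    """Run each named expectation and return a pass/fail report."""
    return {name: check(rows) for name, check in expectations.items()}


# Hypothetical batch with a duplicate key and a missing amount.
rows = [
    {"order_id": 1, "amount": 25.0},
    {"order_id": 2, "amount": 310.5},
    {"order_id": 2, "amount": None},
]

report = validate(rows, {
    "order_id is never null": expect_not_null("order_id"),
    "order_id is unique": expect_unique("order_id"),
    "amount is between 0 and 10000": expect_between("amount", 0, 10_000),
})
for name, passed in report.items():
    print("PASS" if passed else "FAIL", name)
```

Running a report like this as a pipeline step, and failing the step when any rule fails, is what validation during pipeline execution amounts to in practice.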

4. Soda

Best for automated data quality monitoring.

Soda is designed to continuously monitor datasets for quality issues. It enables users to define checks either in code or via a user interface, making it accessible to both engineers and analysts.

Notable features:

- Data contracts and validation rules

- Automated anomaly detection

- Cross-platform compatibility

- Cloud and open-source options

Soda works especially well in environments where data reliability is mission-critical, such as finance or healthcare analytics.

5. Bigeye

Best for warehouse-native monitoring.

Bigeye focuses on monitoring data directly in the warehouse, which reduces the complexity of external integrations. It uses machine learning to baseline normal behavior and detect deviations.

Highlights:

- Schema and freshness tracking

- Machine learning-based anomaly detection

- Data lineage mapping

- Column-level metrics

For teams heavily invested in cloud data warehouses, Bigeye provides targeted monitoring without requiring a full observability overhaul.

6. Apache Airflow Monitoring (with OpenLineage)

Best for orchestration-centric teams.

Apache Airflow is a leading orchestration tool, and when paired with monitoring integrations such as OpenLineage, it offers powerful tracking capabilities.

Advantages:

- Detailed task-level monitoring

- Workflow visualization

- Open-source flexibility

- Extensive plugin ecosystem

Airflow alone provides task failure alerts and scheduling insights, but enhanced with lineage tools, it gives clearer visibility into pipeline dependencies.
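Lineage is useful in practice because it answers the question "what is affected if this dataset breaks?". The sketch below models lineage as a simple adjacency mapping and walks it breadth-first. It does not use the OpenLineage or Airflow APIs; the `downstream_of` function and the dataset names are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set


def downstream_of(lineage: Dict[str, List[str]], start: str) -> Set[str]:
    """Return every dataset reachable from `start` by following
    producer -> consumer edges (breadth-first traversal)."""
    seen: Set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


# Hypothetical lineage graph: each key feeds the datasets in its list.
lineage = {
    "raw.orders": ["staging.orders"],
    "staging.orders": ["marts.revenue", "marts.customers"],
    "marts.revenue": ["dashboard.finance"],
}

impacted = downstream_of(lineage, "staging.orders")
print(sorted(impacted))
```

For this graph, a failure in `staging.orders` reaches both marts and the finance dashboard, which is the kind of impact list a lineage-aware alert can include.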

7. New Relic

Best for unified application and data monitoring.

New Relic extends beyond application performance monitoring into data pipeline visibility. It enables teams to track metrics across services and correlate infrastructure events with pipeline issues.

Key strengths:

- Custom event tracking

- Cross-service observability

- Advanced alert configuration

- Scalable dashboards

If your goal is end-to-end observability across applications and data flows, New Relic offers a cohesive solution.

Comparison Chart

| Tool | Primary Focus | Open Source | Anomaly Detection | Data Lineage | Best For |
|---|---|---|---|---|---|
| Monte Carlo | Data observability | No | Yes | Yes | Enterprise data teams |
| Datadog | Infrastructure and data monitoring | No | Yes | Limited | Cloud-centric teams |
| Great Expectations | Data quality validation | Yes | Rule-based | No | Custom validation setups |
| Soda | Data quality monitoring | Partial | Yes | Limited | Continuous data checks |
| Bigeye | Warehouse monitoring | No | Yes | Yes | Warehouse-native teams |
| Airflow + OpenLineage | Pipeline orchestration visibility | Yes | No | Yes | Orchestration-driven workflows |
| New Relic | Unified observability | No | Yes | Limited | Full-stack monitoring |

How to Choose the Right Tool

The right monitoring solution depends largely on your architecture and maturity stage. Consider these questions:

- Is your main problem data quality or infrastructure reliability?

- Do you need deep lineage visibility?

- Are you operating in a regulated industry?

- How large and complex is your data ecosystem?

Startups may prioritize lightweight, open-source tools that integrate quickly. Large enterprises often require enterprise-grade observability platforms with advanced anomaly detection and lineage mapping.

Also factor in alert fatigue. A tool that sends too many low-value alerts can overwhelm engineers and cause critical issues to be overlooked. Intelligent anomaly detection and customizable notification settings are essential.
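One common way to reduce alert noise is a per-alert cooldown, so that a check failing every few minutes produces one notification rather than dozens. The sketch below is one possible approach under simple assumptions: alerts are identified by a string key, and time is passed in explicitly as seconds. The `AlertThrottle` class and the key names are hypothetical and not taken from any tool in this guide.

```python
from typing import Dict


class AlertThrottle:
    """Suppress repeats of the same alert within a cooldown window."""

    def __init__(self, cooldown_seconds: float) -> None:
        self.cooldown_seconds = cooldown_seconds
        self._last_sent: Dict[str, float] = {}

    def should_send(self, alert_key: str, now: float) -> bool:
        """Return True if the alert should be delivered at time `now`."""
        last = self._last_sent.get(alert_key)
        if last is not None and now - last < self.cooldown_seconds:
            return False  # Same alert fired recently; stay quiet.
        self._last_sent[alert_key] = now
        return True


# Hypothetical 15-minute cooldown; timestamps are seconds since some start.
throttle = AlertThrottle(cooldown_seconds=900)
for key, t in [("orders.freshness", 0), ("orders.freshness", 300),
               ("orders.schema", 300), ("orders.freshness", 1000)]:
    action = "send" if throttle.should_send(key, now=t) else "suppress"
    print(t, key, action)
```

Here the repeat at 300 seconds is suppressed, while the unrelated schema alert and the later repeat at 1000 seconds are delivered. Passing `now` in as a parameter, rather than reading the clock inside the method, keeps the behavior easy to test.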

Preventing Failures Before They Happen

Data failures are rarely dramatic explosions—they’re subtle inaccuracies, delayed updates, or silent schema mismatches that slowly erode trust. The longer they go unnoticed, the more costly they become.

By investing in a robust data pipeline monitoring tool, you create:

- Faster root cause analysis

- Improved data trust

- Reduced downtime

- Greater operational transparency

In today’s analytics-driven landscape, reliable data is not optional. It is the backbone of forecasting, product decisions, and customer insights. Monitoring tools act as guardrails, ensuring that your data pipelines remain stable, observable, and resilient.

As data ecosystems continue to grow in complexity, proactive monitoring will only become more important. Choosing the right combination of observability, validation, and alerting tools today can save countless hours of firefighting tomorrow—and keep your data flowing smoothly when it matters most.
